Artificial intelligence may not have a medical degree, but new Canadian research suggests it can often sound more convincing than real doctors — sometimes with dangerous consequences.

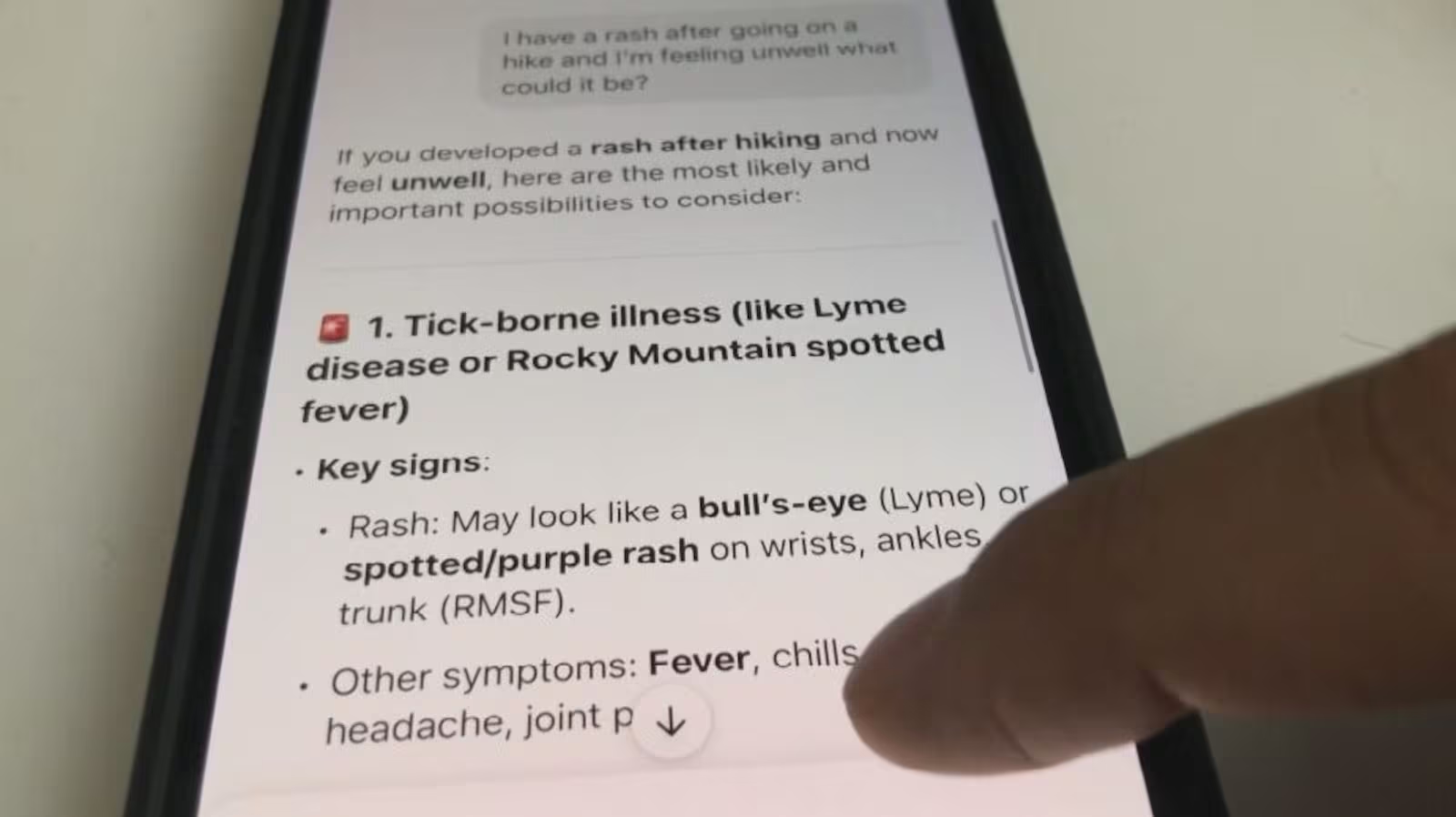

A study by researchers at the University of British Columbia (UBC) has found that AI tools such as ChatGPT not only offer medical information readily but also deliver it in a tone and style that patients find more persuasive and empathetic than that of human health professionals. This effect can lead patients to trust, and act on, inaccurate medical advice.

“The conversations with large language models were more persuasive than the ones with people,” said Dr. Vered Shwartz, UBC assistant professor of computer science and author of Lost in Automatic Translation. “They were also considered more empathetic.”

Shwartz explained that the human-like tone and confident presentation of AI-generated responses make them appear trustworthy. “When it’s written in such an authoritative way and has answers to everything you ask it, of course you assume it knows what it’s talking about,” she said.

Doctors across Canada say they’re already feeling the impact. Dr. Cassandra Stiller-Moldovan, a family physician in Colwood, B.C., says patients are arriving at appointments with their minds made up, citing AI-generated diagnoses or treatment plans. “Oftentimes people get it bang on,” she said. “But other times, you spend a lot of time convincing them that that’s not exactly what’s going on. That education piece takes a lot of time.”

Further research from the University of Waterloo underscores why overconfidence in AI can be risky. In tests using ChatGPT-4, only 31 per cent of its medical answers were entirely correct, meaning that despite the persuasive tone, the tool's answers fell short of fully correct more often than not.

The Canadian Medical Association (CMA) says the growing reliance on AI is partly a reflection of Canada’s strained health-care system. Millions of Canadians lack access to a family doctor, while long wait times at walk-in clinics and emergency rooms are pushing many toward online tools.

“Part of the reason people go online is that they can’t get the access they deserve,” said Dr. Kathleen Ross, CMA president. “When you’ve had a primary care provider over a period of time, you build a trusting relationship and can start having those difficult discussions.”

For now, experts say AI can be a useful starting point for general health questions — but it’s no substitute for a qualified physician. As Shwartz and her team caution, the technology’s ability to sound confident and compassionate doesn’t make it medically reliable.